A dynamic, lightweight, and fast repository-based .NET ORM library.

Package: https://www.nuget.org/packages/RepoDb

Project: https://github.com/RepoDb/RepoDb

Documentation: https://repodb.readthedocs.io/en/latest/

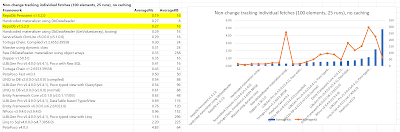

RepoDb v1.5.2 Result:

Individual Fetches:

Set Fetches:

RepoDb Performance

In July 2018, I posted an initial thread about RepoDb on Reddit, claiming that our library was the fastest one. The thread can be found here (https://www.reddit.com/r/csharp/comments/8y5pm3/repodb_a_very_fast_lightweight_orm_and_has_the/).

Many redditors commented and exchanged words with us about the library, especially regarding its performance, stability, purpose, features, syntax, and differentiators.

We know that our IL is very fast, and it is true that RepoDb was the fastest when mapping large result sets (around 1 million rows). However, the community suggested testing the library with the existing benchmark tools that are commonly used to measure the performance of ORM libraries.

With this, we used FransBouma's benchmark tool to see how our library performs compared to the others.

Initial Performance Test Result with FransBouma's Tool

It was embarrassing for us to have claimed that RepoDb was the fastest .NET ORM library. It was personally my fault for not running proper performance benchmarks before announcing it to everyone.

The reason RepoDb was slow in the result above is that the benchmark tool uses an iterative approach to compare the performance of every ORM. During our development, we never considered this approach.

The version of RepoDb at this time was v1.2.0.

Improving the Performance of IL

First, we analyzed the cause of the performance flaws, whether it was the IL or the actual reflection procedure we had. In the beginning, I saw that I did not cache the IL statically, though I was caching it on a per-call basis.

With this, we first cached the IL statically by adding this logic.

First logic:

After logic:

Code Level:

We created a new class named DelegateCache.

    public static class DelegateCache
    {
        ...
    }

The actual class can be found here (https://github.com/RepoDb/RepoDb/blob/master/RepoDb/RepoDb/Reflection/DelegateCache.cs).

In our class DataReaderConverter, we used the newly created class above to get the corresponding delegate for our data reader (it is a pre-compiled, IL-written delegate).
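To make the idea of a statically cached delegate concrete, here is a minimal sketch. It is not the actual DelegateCache from RepoDb: the name DelegateCacheSketch, the use of IDataRecord, and the compile parameter (which stands in for the expensive IL-emitting step) are all assumptions made for illustration.

```csharp
using System;
using System.Collections.Concurrent;
using System.Data;

// Hypothetical sketch only; RepoDb's real DelegateCache may look different.
public static class DelegateCacheSketch
{
    // One delegate per entity type, shared by every call in the process.
    private static readonly ConcurrentDictionary<Type, Delegate> m_cache =
        new ConcurrentDictionary<Type, Delegate>();

    // 'compile' is an assumed stand-in for the step that emits the IL-based mapper.
    public static Func<IDataRecord, TEntity> Get<TEntity>(
        Func<Func<IDataRecord, TEntity>> compile)
        where TEntity : class
    {
        Delegate cached;
        if (m_cache.TryGetValue(typeof(TEntity), out cached) == false)
        {
            // Cache miss: pay the compilation cost once, then store the result.
            cached = compile();
            m_cache.TryAdd(typeof(TEntity), cached);
        }
        return (Func<IDataRecord, TEntity>)cached;
    }
}
```

With a per-call cache the compilation is repeated on every operation. With a static cache like this one, a second call for the same entity type returns the stored delegate and never invokes compile again.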

The approach above significantly improved the performance of RepoDb; however, we still had flaws when it came to memory usage. We were aware that we relied heavily on C# reflection.

Improving the Performance of Reflection

Second, we targeted caching the reflected objects. We aimed to make sure that typeof(Entity).GetProperties() is called only once per class throughout the lifetime of the library.

What we did was introduce a class named PropertyCache to cache the call per class. We also added a class named ClassExpression to pre-compile the GetProperties() operation via a lambda expression, so that the next time we call it, it is already compiled.

    public static class PropertyCache
    {
        public static IEnumerable<ClassProperty> Get<TEntity>(Command command = Command.None)
            where TEntity : class
        {
            ...
        }
    }
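The body of the method is elided above, so the following is only a sketch of how a per-class property cache could work. PropertyCacheSketch and PersonSample are hypothetical names, and the sketch returns plain PropertyInfo objects instead of ClassProperty and ignores the Command parameter.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Reflection;

// Hypothetical entity used only for this illustration.
public class PersonSample
{
    public int Id { get; set; }
    public string Name { get; set; }
}

// Hypothetical sketch only; not the actual PropertyCache from RepoDb.
public static class PropertyCacheSketch
{
    private static readonly ConcurrentDictionary<Type, IReadOnlyList<PropertyInfo>> m_cache =
        new ConcurrentDictionary<Type, IReadOnlyList<PropertyInfo>>();

    public static IReadOnlyList<PropertyInfo> Get<TEntity>()
        where TEntity : class
    {
        // GetProperties() runs only when the type is not in the cache yet.
        // Under contention the factory may run more than once, but a single result is kept.
        return m_cache.GetOrAdd(typeof(TEntity), type => type.GetProperties());
    }
}
```

Calling PropertyCacheSketch.Get<PersonSample>() twice returns the same list instance, so the reflection cost is paid once per class instead of once per operation.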

We also created a class named ClassProperty that contains a PropertyInfo object along with the properties and methods needed to cache the definition of the property. We implemented IEquatable<ClassProperty> so that collections can compare these objects efficiently.

    public class ClassProperty : IEquatable<ClassProperty>
    {
        ...
    }

Here is how we simply cache the definition at the instance level.

Notice the check of the m_isPrimaryAttributeWasSet variable: if it is already set to true, the method has already been called, even if the value of m_primaryAttribute is null.
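The flag matters because null is a valid result: a property without the attribute would otherwise be looked up again on every call. Below is a minimal sketch of this pattern. The field names follow the text above, while ClassPropertySketch, PrimaryAttributeSketch, and LookupCount are hypothetical and exist only for illustration. The sketch is not thread-safe.

```csharp
using System;
using System.Reflection;

// Hypothetical attribute used only for this illustration.
[AttributeUsage(AttributeTargets.Property)]
public sealed class PrimaryAttributeSketch : Attribute { }

// Hypothetical sketch only; not the actual ClassProperty from RepoDb.
public class ClassPropertySketch
{
    private readonly PropertyInfo m_property;
    private bool m_isPrimaryAttributeWasSet;            // true once the lookup has run
    private PrimaryAttributeSketch m_primaryAttribute;  // may legitimately stay null

    public ClassPropertySketch(PropertyInfo property)
    {
        m_property = property;
    }

    // Counts real reflection lookups, for demonstration purposes only.
    public int LookupCount { get; private set; }

    public PrimaryAttributeSketch GetPrimaryAttribute()
    {
        // Check the flag, not the value: a null result is cached as well.
        if (m_isPrimaryAttributeWasSet)
        {
            return m_primaryAttribute;
        }
        LookupCount++;
        m_primaryAttribute = m_property.GetCustomAttribute<PrimaryAttributeSketch>();
        m_isPrimaryAttributeWasSet = true;
        return m_primaryAttribute;
    }
}
```

If the code tested m_primaryAttribute for null instead of using the flag, every property without the attribute would trigger a new reflection lookup on each call.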

We did the same for the other definition methods throughout the class. The actual class can be found here (https://github.com/RepoDb/RepoDb/blob/master/RepoDb/RepoDb/ClassProperty.cs).

Since the call to GetProperties() now happens only once per class after we introduced the PropertyCache class, we are confident that memory usage is minimized here, as we have removed the repeated reflection calls.

We were all set with the implementation above; however, it was still not enough until we cached the actual activity of the caller (the actual project that references RepoDb). With this, we came up with the idea of caching the command text.

Caching the CommandTexts

We knew that caching the outside calls would greatly improve the performance of the library, as it would bypass all the operations mentioned earlier in this post.

With this, we first implemented the request classes listed below.

- QueryRequest for Query

- InsertRequest for Insert

- DeleteRequest for Delete

- UpdateRequest for Update

- etc

The classes can be found here (https://github.com/RepoDb/RepoDb/tree/master/RepoDb/RepoDb/Requests).

Each class defined above accepts all the parameters passed by the outside call. This ensures that the passed values serve as the key that uniquely identifies the command text we are going to cache.

Identifying the Differences of the Parameter Values

We overrode GetHashCode() and Equals() and implemented the IEquatable<T> interface to customize the equality comparison of the following classes.

- All request classes

- ClassProperty

- QueryField

- Field

- Parameter

- QueryGroup

This enables us to identify and define the correct equality of the objects (internally to RepoDb only).

Inside the library, we forced the equality: for example, FieldA with a name equal to "Name" is considered equal to FieldB with a name equal to "Name", and so forth. The logic is very simple, as the code below shows.

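    // The hash code is derived from the field name only.
    public override int GetHashCode()
    {
        return Name.GetHashCode();
    }

    // Two fields are treated as equal when their hash codes match.
    public override bool Equals(object obj)
    {
        return GetHashCode() == obj?.GetHashCode();
    }

    public bool Equals(Field other)
    {
        return GetHashCode() == other?.GetHashCode();
    }

    public static bool operator ==(Field objA, Field objB)
    {
        // A null left operand is equal only to a null right operand.
        if (ReferenceEquals(null, objA))
        {
            return ReferenceEquals(null, objB);
        }
        return objA?.GetHashCode() == objB?.GetHashCode();
    }

    public static bool operator !=(Field objA, Field objB)
    {
        return (objA == objB) == false;
    }

The actual class can be found here (https://github.com/RepoDb/RepoDb/blob/master/RepoDb/RepoDb/Field.cs).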
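One property of comparing hash codes alone is that two different names that happen to produce the same hash would be treated as equal. As a point of comparison only, here is a sketch of a stricter variant that hashes by name but confirms equality by comparing the names themselves. FieldSketch is a hypothetical class and is not how RepoDb implements Field.

```csharp
using System;

// Hypothetical sketch only; a stricter alternative, not RepoDb's Field class.
public sealed class FieldSketch : IEquatable<FieldSketch>
{
    public FieldSketch(string name)
    {
        if (name == null) throw new ArgumentNullException(nameof(name));
        Name = name;
    }

    public string Name { get; }

    // The hash is still derived from the name, so equal fields share a bucket.
    public override int GetHashCode()
    {
        return StringComparer.Ordinal.GetHashCode(Name);
    }

    // Equality compares the names, so a hash collision cannot produce a false match.
    public bool Equals(FieldSketch other)
    {
        return !ReferenceEquals(other, null)
            && string.Equals(Name, other.Name, StringComparison.Ordinal);
    }

    public override bool Equals(object obj)
    {
        return Equals(obj as FieldSketch);
    }

    public static bool operator ==(FieldSketch objA, FieldSketch objB)
    {
        return ReferenceEquals(objA, null) ? ReferenceEquals(objB, null) : objA.Equals(objB);
    }

    public static bool operator !=(FieldSketch objA, FieldSketch objB)
    {
        return !(objA == objB);
    }
}
```

The trade-off is one extra string comparison per equality check, which only happens after the hash codes have already matched when the object is used as a dictionary key.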

Caching Process for CommandText

Lastly, we introduced a class named CommandTextCache that holds the cached command text of the caller. See below the implementation of one of its methods.

    using System.Collections.Concurrent;

    internal static class CommandTextCache
    {
        // Command texts keyed by request; equal requests share one entry.
        private static readonly ConcurrentDictionary<BaseRequest, string> m_cache = new ConcurrentDictionary<BaseRequest, string>();

        public static string GetBatchQueryText<TEntity>(BatchQueryRequest request)
            where TEntity : class
        {
            var commandText = (string)null;
            // Build the command text only on a cache miss.
            if (m_cache.TryGetValue(request, out commandText) == false)
            {
                commandText = <codes to get the BatchQuery command text>;
                m_cache.TryAdd(request, commandText);
            }
            return commandText;
        }
    }

The actual class implementation can be found here (https://github.com/RepoDb/RepoDb/blob/master/RepoDb/RepoDb/CommandTextCache.cs).
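The snippet above leaves out the request class and the statement-building step, so here is a self-contained sketch of the same idea. QueryRequestSketch, its EntityType and Where members, CommandTextCacheSketch, and the build parameter are all hypothetical. What exactly RepoDb's request classes include in their equality is not shown in this post, so the choice of key here is an assumption.

```csharp
using System;
using System.Collections.Concurrent;

// Hypothetical request type; it exists only to serve as a dictionary key.
public sealed class QueryRequestSketch : IEquatable<QueryRequestSketch>
{
    public QueryRequestSketch(Type entityType, string where)
    {
        EntityType = entityType;
        Where = where ?? string.Empty;
    }

    public Type EntityType { get; }
    public string Where { get; }   // assumed description of the filter, e.g. "Id"

    public bool Equals(QueryRequestSketch other)
    {
        return !ReferenceEquals(other, null)
            && EntityType == other.EntityType
            && string.Equals(Where, other.Where, StringComparison.Ordinal);
    }

    public override bool Equals(object obj)
    {
        return Equals(obj as QueryRequestSketch);
    }

    public override int GetHashCode()
    {
        unchecked
        {
            return (EntityType.GetHashCode() * 397) ^ StringComparer.Ordinal.GetHashCode(Where);
        }
    }
}

// Hypothetical sketch only; not the actual CommandTextCache from RepoDb.
public static class CommandTextCacheSketch
{
    private static readonly ConcurrentDictionary<QueryRequestSketch, string> m_cache =
        new ConcurrentDictionary<QueryRequestSketch, string>();

    // 'build' is an assumed stand-in for the statement-building code.
    public static string GetQueryText(
        QueryRequestSketch request,
        Func<QueryRequestSketch, string> build)
    {
        // The builder runs only on a cache miss for an equal request.
        return m_cache.GetOrAdd(request, build);
    }
}
```

Two requests that are constructed separately but compare as equal resolve to the same cache entry, which is why the equality overrides described earlier are a prerequisite for this cache.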

Let us say somebody calls the repository's Query method as below.

    using (var repository = new DbRepository<SqlConnection>(connectionString))
    {
        repository.Query<Person>(new { Id = 10220 });
    }

The expected command text is below.

    SELECT [Id], [Name], [Address], [DateOfBirth], [DateInsertedUtc], [LastUpdatedUtc] FROM [dbo].[Person] WHERE ([Id] = @Id);

Inside RepoDb, the repository's Query method creates a new QueryRequest object with the parameters defined by the caller, in this case (new { Id = 10220 }).

Then we simply call CommandTextCache.GetQueryText(queryRequest) to get the cached command text.

RepoDb Final Results

There are two ways of calling the operations in RepoDb: with a persistent connection and with a non-persistent connection. There are also two ways to query: object-based and raw-SQL-based.

The result below is only for the RawSql approach, as we have never injected the Object-Based approach. (Note: RawSql is always faster than Object-Based.) This result was personally executed by FransBouma on their test environment (with Release binaries).

Individual fetches:

Set fetches:

The version of RepoDb at this time is v1.5.3.

Kindly share your thoughts, comments, and inputs, and do not forget to tag me if you would like an immediate response. Thank you for reading this blog!